“Without big data, you are blind and deaf and in the middle of a freeway.” — Geoffrey Moore.

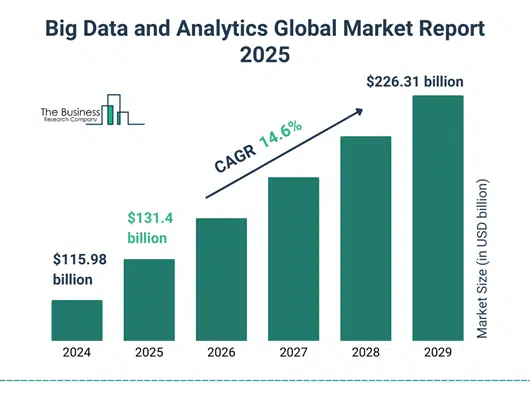

Big data is rapidly pushing its boundaries and getting bigger. Whether it’s healthcare, banking, education, entertainment, travel, or any other industry, big data databases are widely used. The volume of global data is climbing at an incredible rate, and the global big data market is growing with it. A recent figure projects that the big data market will grow from $115.98 billion to $226.31 billion by 2029.

Open-source big data databases are an affordable option for storing, managing, and analyzing data. Let us discuss big data in detail and make an unbiased comparison of the top 7 big data databases.

What is Big Data?

To put it simply, Big Data is a collection of data that is complex, larger than traditional data, and growing exponentially with time. It is so large that it is beyond the capability of traditional data management software to handle, store, or process. Hence, we need different systems and data warehousing solutions to manage it. This is where a Big Data Database Management System comes in.

Types of Big Data

There are multiple types of big data. Understanding these fundamental categories helps you select a suitable option from any big data database list and fit it to the requirements of your organization:

1. Structured Data

Structured data is highly organized; in relational databases, it is arranged in rows and columns. It follows a strict schema and is therefore simple to search and analyze using SQL queries. This data type suits conventional transactional systems and accounts.

- Examples: Bank records, spreadsheets, inventory tables.

- Suitable databases for big data: Traditional relational databases such as MySQL and PostgreSQL, as well as big data databases that support structured data.
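To make the idea of a strict schema concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. The `inventory` table, its columns, and the sample rows are hypothetical and chosen only for illustration; a production system would use a server database such as the ones named above.

```python
import sqlite3

# In-memory database; the table and rows are illustrative assumptions.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE inventory ("
    "sku TEXT PRIMARY KEY, item TEXT NOT NULL, quantity INTEGER NOT NULL)"
)
conn.executemany(
    "INSERT INTO inventory VALUES (?, ?, ?)",
    [("A-1", "keyboard", 12), ("A-2", "mouse", 0), ("A-3", "monitor", 5)],
)

# Every row follows the same schema, so a plain SQL query can filter it.
in_stock = conn.execute(
    "SELECT item, quantity FROM inventory WHERE quantity > 0 ORDER BY item"
).fetchall()
print(in_stock)
```

Because the schema is fixed up front, the query can rely on every row having a `quantity` column of the same type.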

2. Semi-Structured Data

Semi-structured data combines qualities of structured data with those of unstructured data. Although it does not follow a rigidly defined schema, it has organizational features such as tags or markers that convey semantics.

- Examples: JSON documents, XML, log files, emails.

- Big data NoSQL databases: Couchbase and MongoDB, which offer the scalability and flexibility needed to store and query this hybrid data type.
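As a small illustration of how tags give semi-structured data its meaning, the sketch below parses two JSON records that share a general shape but carry different optional fields. The records and field names are hypothetical.

```python
import json

# Two hypothetical event records: similar shape, different optional fields.
raw_events = [
    '{"user": "ana", "action": "login", "device": {"os": "android"}}',
    '{"user": "ben", "action": "purchase", "amount": 19.99}',
]

events = [json.loads(line) for line in raw_events]

# Keys act as the tags that carry meaning; absent fields get a default.
summary = [(e["user"], e["action"], e.get("amount", 0.0)) for e in events]
print(summary)
```

No schema had to be declared in advance, which is the flexibility that document stores build on.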

3. Unstructured Data

Unstructured data is the largest and most rapidly expanding category and refers to any content that has no predefined schema or organization. It includes text files, multimedia files, social media content, and Internet of Things data streams.

- Examples: Videos, images, audio files, tweets, and content on a website.

- Big data NoSQL databases: Apache Cassandra, Apache HBase, and Amazon DynamoDB, which appear on most current lists of top big data databases because they handle extremely large and rapidly expanding volumes of highly diverse data.

Let’s find out how traditional data differs from big data below:

| S. No. | Traditional Data | Big Data |
| --- | --- | --- |
| 1. | A small volume of collected data, using traditional methods for data analysis. | Huge volume of collected data that can’t be analyzed using traditional methods. |
| 2. | They are structured data saved in spreadsheets, databases, etc. | It includes semi-structured, unstructured, and structured data. |
| 3. | It often supports manual data collection. | It supports digital data collection using automated systems. |
| 4. | It mostly comes from internal systems. | It comes from sources like mobile devices, social media, etc. |
| 5. | It includes data like customer data, money transactions, etc. | It includes data such as videos, images, etc. |
| 6. | Primary statistical methods can be used for data analysis. | Data analysis needs advanced analytics methods like ML, data mining, etc. |
| 7. | Methods used to analyze data are comparatively slow and gradual. | Big data analysis methods are fast and instant. |
| 8. | It generates data after the event ends. | It generates data every second. |
| 9. | It is processed in batches/groups. | It is processed and developed in real-time. |
| 10. | It has limited values and insights. | It offers valuable insights and patterns. |

Big data is considered one of the most critical revolutions to modernize business processes, and it offers numerous benefits. Through big data database technologies, organizations can enhance decision-making, achieve cost savings, manage supply chains effectively, and detect fraud. Moreover, big data can drive business profit through risk management, mathematical optimization, and predictive analytics.

Some of the most popular big data database examples are Apache Cassandra, MongoDB, Apache HBase, Google BigQuery, Amazon Redshift, and Snowflake.

What are Datasets?

A dataset is a group of data that is organized and stored together for processing. Usually, the information in a dataset is gathered from a single source or is meant for a particular project and is connected in some way. A dataset could, for instance, include a collection of company data (sales numbers, client contact details, transactions, etc.).

A dataset can include various data types, such as text, photos, audio recordings, and numerical values. Usually, a dataset’s contents can be accessed separately, in combination, or as a single unit, depending on your requirements.

Datasets are a key component of data analytics and machine learning (ML). They supply the information from which data analysts derive patterns and insights. Choosing the right dataset for a project is a critical step in training and deploying an ML model effectively.


Big Data Architecture

Big data architecture refers to the structure that supports the efficient ingestion, processing, storage, management, and analysis of very large and heterogeneous datasets that traditional systems cannot handle. Any organization that works with big data databases and wants to derive insights from large volumes of data needs this kind of architecture.

Source: learn.microsoft.com

The architecture generally consists of multiple layers, each performing specific roles to manage the flow and transformation of data:

1. Data Sources Layer

This layer contains all the data sources, covering structured, semi-structured, and unstructured data: transactional databases, IoT sensors, social media, log files, and streaming platforms. Data may be collected in batches or in real time. Knowing these sources is essential for choosing appropriate databases for big data processing.

2. Data Ingestion Layer

Also referred to as the raw layer, the ingestion layer gathers data from a range of sources and brings it into the system. The ingestion process accommodates many data types and speeds and preserves the data in its original form without modifying it. The tools and technologies at this stage let data flow smoothly into big data NoSQL databases or data lakes.

3. Data Storage Layer

In this layer, the ingested data is stored efficiently so that it can be processed and analysed further. The storage mechanism should be flexible and scalable, and it usually relies on distributed file systems such as Hadoop HDFS, big data databases such as Cassandra, or cloud-based warehouses such as Amazon Redshift. The most appropriate database for big data is determined by the volume, kind, and speed of the data received.

4. Data Processing Layer

In this layer, data is cleaned, transformed, and prepared so that it is analytics-ready. Processing happens in batches or as real-time streams, depending on the requirements of the use case. Many big data databases integrate with processing frameworks such as Apache Spark or Flink to enable scalable computation.
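The cleaning and transformation step can be sketched in a few lines of plain Python. This is a minimal illustration, not how Spark or Flink work internally; the `clean_records` function and the `name` and `amount` fields are hypothetical.

```python
def clean_records(raw_rows):
    """Drop incomplete rows and normalize types so the data is analytics-ready."""
    cleaned = []
    for row in raw_rows:
        name = (row.get("name") or "").strip()
        amount = row.get("amount")
        if not name or amount is None:
            continue  # skip rows that are missing required fields
        try:
            cleaned.append({"name": name.lower(), "amount": float(amount)})
        except (TypeError, ValueError):
            continue  # skip rows whose amount is not numeric
    return cleaned


raw = [
    {"name": " Alice ", "amount": "10.5"},
    {"name": "", "amount": "3"},
    {"name": "Bob", "amount": "n/a"},
    {"name": "Carol", "amount": 7},
]
print(clean_records(raw))
```

In a real pipeline the same logic would typically be expressed as a distributed transformation so that it scales with the data.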

5. Data Analytics Layer

In this layer, data is mined for actionable insights using analytical models and machine learning algorithms. BI platforms and visualization tools use the processed data to produce dashboards and reports. It is important to choose a powerful big data database with strong support for your analytics tools, so that insights can be delivered efficiently.

6. Data Consumption Layer

The last level provides findings to end-users, applications, or automated work processes. Decision-making is performed with the help of business intelligence systems, dashboards, and operational applications that access this layer. Fast and dependable big data NoSQL databases assist in giving real-time access to information.

Choosing the right components, including appropriate databases from the big data databases list, ensures the scalability, flexibility, and performance necessary for modern data-driven enterprises.

Features of Big Data Databases

Big data and NoSQL databases are designed specifically to handle the problems posed by large, fast, and varied datasets. The features below distinguish them from traditional databases and help organisations handle large amounts of data and derive value from it effectively.

1. Scalability

A key attribute of big data databases is that they scale horizontally across a large number of commodity servers or cloud instances. This allows them to support data growth without a decline in performance, which is very important when selecting the most appropriate databases for big data.

2. Distributed Storage and Processing

Data is stored and processed across clusters of machines, which makes parallelism and failover possible. This kind of distribution offers high availability, fault tolerance, and reduced processing time, and it is typical of big data NoSQL databases such as Apache Cassandra, MongoDB, and Amazon DynamoDB.
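One simple way to picture how data is spread across machines is hash partitioning: each key is hashed, and the hash decides which node stores it. The sketch below uses plain modulo partitioning as an assumption for illustration; production systems generally use more elaborate schemes (for example, consistent hashing with replication), and the node names are hypothetical.

```python
import hashlib


def node_for_key(key, nodes):
    """Pick a node by hashing the key (simple modulo partitioning)."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return nodes[int(digest, 16) % len(nodes)]


nodes = ["node-a", "node-b", "node-c"]  # hypothetical cluster members
keys = ["user:1", "user:2", "user:3", "user:4"]
placement = {key: node_for_key(key, nodes) for key in keys}
print(placement)
```

The same key always maps to the same node, so reads know where to look. The weakness of plain modulo partitioning is that adding a node remaps most keys, which is one reason real systems prefer consistent hashing.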

3. Flexible Schema Designs

In contrast to traditional relational databases, big data and NoSQL databases allow dynamic schemas or a schema-less design, which is essential for working with data that mixes structured and unstructured content. This flexibility makes them suitable for modern applications whose data formats and sources change frequently.

4. High Throughput and Low Latency

These databases are optimized for heavy read and write workloads with low latency, which enables real-time analytics, streaming data ingestion, and responsive applications. This is a great advantage in situations that demand fast insights.

5. Multi-Model Support

The most advanced big data databases can accommodate different data models, such as key-value, document, wide-column, and graph, in a single platform. Such flexibility enables companies to choose the most appropriate model to address various application demands.

Selecting the right combination of big data and NoSQL databases based on these features is pivotal to leveraging the full potential of big data technologies.

Factors To Be Considered While Choosing An Ideal Database Management System (DBMS)

Choosing the right database management system is the basic step that determines the relevance of everything done afterwards. It is critical because the DBMS provides efficient data management, improves decision-making through data analysis, and ensures data integrity and safety. Still confused about which tool is suitable for your business? No problem. Let us dig into the factors that affect the selection of the best DBMS for your business:

1. Data Requirements and Scalability

This is the primary factor to consider when deciding on a big data database tool for your company. Data type, complexity, and volume all play a vital role when you are looking for the ideal database technology. An organization may need a big data database management system to handle structured, semi-structured, or unstructured data. Additionally, different big data DBMSs offer distinct data models, including document-based, object-oriented, network, relational, and hierarchical.

Hence, don’t forget to assess the size and growth rate of your data before selecting a data model. You cannot overlook scalability either: the database must effectively manage both present and future business requirements. For businesses that anticipate substantial data volumes or quick expansion, scalability is essential.

2. Agility and Functionality

You can get maximum application agility through an operational, analytical database with a suitable model platform and flexible schema. It is important to consider the data functionality like:

- Data integrity

- Data security

- Backup and recovery

- Concurrency

- Consistency

- Manipulation and query

- Analysis and reporting

Data security features safeguard against unwanted access or editing, while data integrity ensures the consistency and correctness of data. Backup and recovery tools make it possible to restore data if corruption or loss happens.

Concurrency and consistency controls ensure that simultaneous queries are handled correctly, while data manipulation and query features allow insertion, deletion, update, and selection. You cannot afford to overlook these things if you want to choose big data technologies that are right for your enterprise.

3. Data Security

Every database management system has a unique method for safeguarding data. The data structure determines the data protection strategies, which should be carefully taken into account while selecting a database management system. Strong security will support compliance with national and international data safety and protection laws.

Make sure the database has cutting-edge security features to safeguard the data in the event of loss or theft. More significantly, the management of identity and access is one of the factors that is frequently disregarded when selecting a DBMS.

In order to prevent users from having more access than necessary, access should be sufficiently fine-grained to allow for the easy restriction of certain data objects. The capacity to recover from a security breach and data privacy and protection are ultimately essential components of any system.
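The idea of fine-grained access can be sketched as a lookup from roles to explicitly granted (object, action) pairs. This is only an illustration of the principle; the roles, data objects, and the `is_allowed` helper are hypothetical, and a real DBMS enforces this through its own role and grant mechanisms rather than application code.

```python
# Hypothetical role-to-permission mapping used only for illustration.
PERMISSIONS = {
    "analyst": {("sales", "read")},
    "admin": {("sales", "read"), ("sales", "write"), ("customers", "read")},
}


def is_allowed(role, data_object, action):
    """Return True only if the role was explicitly granted the action."""
    return (data_object, action) in PERMISSIONS.get(role, set())


print(is_allowed("analyst", "sales", "read"))
print(is_allowed("analyst", "sales", "write"))
```

Denying by default, so that unknown roles and ungranted actions are refused, keeps users from accumulating more access than they need.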

4. Cost, Compatibility, and Usability

After weighing all the features above, you do not want to find that the big data database tool is beyond your company’s budget, so cost should be considered alongside the other crucial factors. Businesses must estimate the total cost of each DBMS, including maintenance, hardware, training, support, and licensing fees. Costs depend on the DBMS provider, their brand value, technical support, customer service, and documentation.

The compatibility of the DBMS with the company’s operating system, hardware, network, application framework, and user interface must be verified. Examine the DBMS’s ability to exchange data and communicate with external or other data sources as well.

In addition to compatibility, think about how easy the system will be for your teams to use. Many technologies provide an intuitive workflow, for example through drag-and-drop operations. Usability significantly affects database developers, IT staff, marketing specialists, and other employees of the business. Therefore, selecting a DBMS that each team can use and manage effectively is crucial.


Top 7 Big Data Technologies

Let us now talk about the list of big data databases to get a clear picture of which technology would be suitable for your organization.

1. Apache Cassandra

Apache Cassandra is an open-source NoSQL database built to manage huge amounts of data. It can handle structured, semi-structured, and unstructured data.

Apache Cassandra was created at Facebook to power its inbox search feature. It was released as open source in 2008 and became an Apache top-level project in 2010.

Source: Apache CASSANDRA

Pros

- Scales well and supports huge datasets distributed across nodes.

- Distributed, masterless design provides fault tolerance and high availability.

Cons

- It has a steep learning curve and requires considerable expertise to set up and manage.

- Cumbersome to tune and upgrade; it may incur high maintenance costs even though it has no license costs.

Price: Free and open-source. No licensing fees. Commercial support may incur costs.

2. Apache HBase

Apache HBase is built on top of the Hadoop ecosystem. It is a distributed, column-oriented NoSQL database that is especially well-suited for real-time read-and-write operations and is made for sparse, large-scale datasets. Because of its scalability and fault tolerance, HBase is an ideal big data database tool for applications that require high-throughput data access.

Some of the most common uses of HBase are time-series databases, social media platforms, and sensor data storage, all of which require read-and-write access to massive amounts of data.

Source: Apache HBASE

Pros

- Can handle and store very large datasets efficiently on top of HDFS.

- Highly scalable, with easy addition of new nodes to expand the system.

Cons

- Authentication and permissions are not enabled by default; securing a cluster requires additional configuration.

- Indexing is limited to the row key by default; secondary indexes must be built manually.

Price: Free and open-source. Costs mainly arise from infrastructure, support, and maintenance.

3. MongoDB

MongoDB is a big data database that is popular for its adaptability, user-friendliness, and scalability. Unlike conventional relational databases, it offers a more flexible and natural data format by storing data as documents that resemble JSON.

Applications for content management, the Internet of Things, and real-time analytics can benefit from MongoDB’s ability to manage massive amounts of unstructured and semi-structured data. High performance and intricate data interactions are supported by its sophisticated query language and horizontal scalability capability. Hire remote MongoDB developers to drive your business operations and decision-making process.

Source: MongoDB

Pros

- Easy handling of structured and unstructured data, with schemas that are simple to design.

- Horizontal scaling through sharding across a cluster of servers to handle big data and heavy traffic.

Cons

- Managing large deployments can be complex.

- Document size limit (16MB per document).

Price: Free (open source Community Edition). Enterprise and advanced features require a paid license.

4. Amazon DynamoDB

DynamoDB is useful for handling any volume of traffic and enables users to build databases that can store and retrieve any quantity of data. By automatically distributing data and traffic across servers, it offers great performance and dynamically manages each customer’s requirements. It is a fully managed NoSQL database service that is scalable, quick, and predictable in terms of performance.

Because they don’t have to worry about cluster scaling, software patching, or hardware provisioning, users are relieved of the administrative hassles associated with running and growing a distributed database. By offering encryption at rest, it also removes the complexity and operational load associated with safeguarding sensitive data.

Source: Amazon DynamoDB

Pros

- It is fully managed and serverless, and there is no requirement for manual cluster management.

- Built-in encryption and integrated security features.

Cons

- Pricing is complicated and can be unpredictable when consumption fluctuates.

- Limited query richness: no full SQL-like joins and limited transactional capabilities.

Price: Pay-as-you-go, with charges for reads and writes, data storage, and optional features. On-demand and provisioned capacity modes are available. There is a free tier with limited usage; pricing is highly dependent on the amount of usage.

5. Google Bigtable

Google Cloud Bigtable can store terabytes or even petabytes of data. It is a sparsely populated table that can scale to billions of rows and thousands of columns. Each row is indexed by a single value, known as the row key.

Bigtable provides low-latency storage for massive single-keyed data. It supports high read and write throughput at low latency, making it a good data source for MapReduce operations.

Applications can use Bigtable through numerous client libraries, including a supported extension to the Apache HBase library for Java. As a result, it works with the Apache ecosystem of open-source big data applications.
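The sparse, row-keyed model described above can be pictured with nested dictionaries. This is a conceptual sketch only and does not use the Bigtable client API; the row keys, column names, and helper functions are hypothetical.

```python
# Sparse table sketch: row key -> {column -> value}.
# Only populated cells are stored, so rows may have different columns.
table = {
    "user#1001": {"profile:name": "Ana", "stats:logins": 42},
    "user#1002": {"profile:name": "Ben"},  # no stats column for this row
}


def get_row(row_key):
    """Single-row lookup by row key, the only indexed value."""
    return table.get(row_key, {})


def scan_prefix(prefix):
    """Range-style scan over sorted row keys that share a prefix."""
    return [key for key in sorted(table) if key.startswith(prefix)]


print(get_row("user#1002"))
print(scan_prefix("user#"))
```

Since the row key is the only index, the way keys are designed largely determines which lookups and scans are efficient.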

Pros

- Fully managed service with integration into the Google Cloud ecosystem.

- Automatic scaling and strong data compression.

Cons

- Limited support for complex querying; optimized for single-row access.

- Security features could be improved.

Price: Pay-as-you-go, based on the number of provisioned nodes, storage (SSD/HDD), and bandwidth. E.g., roughly $0.65 per node per hour on demand, with storage billed separately.

6. Apache Hive

Apache Hive is an ETL and data warehousing tool built on top of Hadoop. It offers a SQL-like interface to data stored in the Hadoop Distributed File System (HDFS) and supports data analysis and queries. It makes it easier to read, write, and manage large datasets that reside in distributed storage, using Structured Query Language (SQL) syntax.

Hive, which offers basic SQL capability for analytics, was first created at Facebook and has since been used and developed by companies such as Netflix and Amazon. Without it, SQL-style queries over distributed data would have to be implemented in the MapReduce Java API; Hive provides the SQL abstraction so that this is not necessary. Since the majority of data warehousing applications use SQL-based query languages, Hive also makes it easier to port such applications to Hadoop.

Source: ApacheHive
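To see why a SQL layer over MapReduce is convenient, consider what a query like `SELECT country, COUNT(*) FROM visits GROUP BY country` has to do underneath. The sketch below expresses it as a map phase and a reduce phase in plain Python. It is a conceptual illustration, not Hive’s actual execution plan, and the `visits` rows are hypothetical.

```python
from collections import defaultdict

visits = [{"country": "IN"}, {"country": "US"}, {"country": "IN"}]  # hypothetical rows


def map_phase(rows):
    """Emit one (key, 1) pair per row."""
    return [(row["country"], 1) for row in rows]


def reduce_phase(pairs):
    """Sum the values for each key, like COUNT(*) with GROUP BY."""
    totals = defaultdict(int)
    for key, value in pairs:
        totals[key] += value
    return dict(totals)


counts = reduce_phase(map_phase(visits))
print(counts)
```

With a SQL interface, the analyst writes the one-line query and the engine takes care of generating and distributing the equivalent phases.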

Pros

- Very low cost for large-scale batch processing.

- Useful in the Hadoop ecosystem to conduct ETL, data warehousing, and business analytics.

Cons

- Not intended for real-time or OLTP applications; batch only.

- High latency compared to modern dedicated query engines.

Price: Free and open-source. Costs primarily include infrastructure and deployment-related costs.

7. ClickHouse

Yandex developed ClickHouse, a columnar database management system, and released it as open source in 2016. It was created to give users an efficient, near-instant way to run complex analytical queries on huge volumes of data.

Enterprises use ClickHouse for analytical processing, business intelligence, and data warehousing. Due to its capability to analyze huge data rapidly and efficiently, it gained popularity in various industries like e-commerce, healthcare, IoT, media, gaming, etc.

Source: ClickHouse
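The benefit of a columnar layout for analytical queries can be shown with a small sketch: instead of storing one record per row, each column is stored as its own sequence, so an aggregate only touches the column it needs. This is a simplified illustration, not ClickHouse’s storage format, and the sample orders are hypothetical.

```python
rows = [
    {"order_id": 1, "region": "EU", "revenue": 120.0},
    {"order_id": 2, "region": "US", "revenue": 80.0},
    {"order_id": 3, "region": "EU", "revenue": 50.0},
]

# Column-oriented layout: one list per column instead of one record per row.
columns = {name: [row[name] for row in rows] for name in rows[0]}

# An aggregate over one column only needs to read that column's values.
total_revenue = sum(columns["revenue"])
print(total_revenue)
```

Values of the same type stored together also tend to compress well, which is consistent with the storage savings listed among the pros.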

Pros

- Storage is efficient and data is highly compressed, which cuts underlying storage costs.

- Supports scalable distributed processing and analytics in real time.

Cons

- Documentation and third-party tool integration could be better.

- Not ideal for streaming or highly mutable data.

Price: The self-hosted version is free and open source. Managed cloud service costs are pay-as-you-go, e.g., roughly $160/month for a small managed cluster or as high as $2,500/month for enterprise clusters. Costs depend on compute, storage, and active hours of usage.

A clear comparison between the seven big data database technologies is shown below:

| Technology | Type | Best used for | Used by |
| --- | --- | --- | --- |
| Apache Cassandra | NoSQL, Column-family database | High availability and scalability | Facebook, Netflix, Twitter |
| Apache HBase | NoSQL, Columnar Database | Real-time analytics on big data | LinkedIn, Adobe, Pinterest |
| MongoDB | NoSQL, Document Database | Flexible & scalable JSON-like storage | Uber, eBay, MetLife |
| Amazon DynamoDB | NoSQL, Key-value & document store | Fully managed, serverless, data processing | AWS services, Disney, Airbnb |
| Google Bigtable | NoSQL, Wide-column store | High-throughput & low-latency operations | Google Analytics, YouTube, Spotify |
| Apache Hive | SQL-based Data warehouse on Hadoop | Structured Querying on Big Data | Facebook, Netflix, Amazon |
| ClickHouse | Column-oriented database | High-speed OLAP & Real-time analytics | Cloudflare, Yandex, eBay |

Emerging Technologies Influencing Big Data Database

As big data evolves, emerging technologies such as edge computing, blockchain, and quantum computing are transforming how data is processed and managed. Here’s how each of these innovations is playing a transformative role.

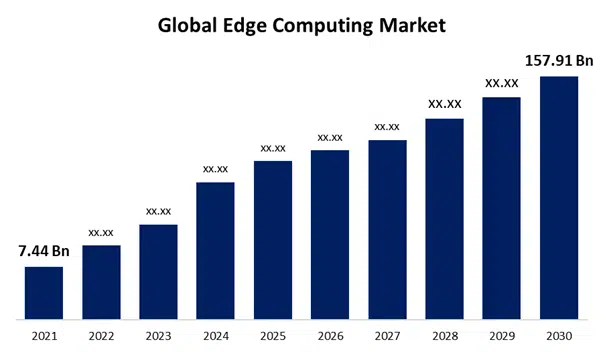

1. Edge Computing

By processing data closer to its source, edge computing lowers latency and enhances real-time analytics. Applications that require instant data processing must use this technology to avoid the delays brought on by a centralized cloud infrastructure.

Use Case: To cut downtime and enhance maintenance plans, General Electric (GE) uses edge computing to track jet engine performance in real-time. Instead of moving the data to a common or centralized place, GE can enhance operational efficiency and avoid expensive equipment breakdowns by processing data locally.

The market for edge computing is expected to reach $157.91 billion by 2030, and its contribution to real-time analytics will ultimately grow.

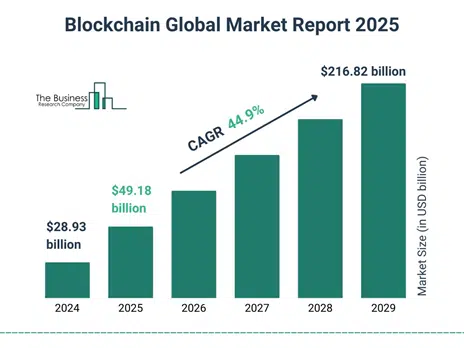

2. Blockchain Integration

Blockchain is basically an ideal technology for companies that seek verifiable and unchangeable data records as it offers a transparent and safe means of maintaining and exchanging data.

Use Case: By using blockchain technology to track its food supply chain, Walmart is able to reduce food waste and promptly identify issues. By tracking every product from farm to store, Walmart has reduced food waste by 20%, improving sustainability and food safety.

The global blockchain market has grown rapidly in recent years. It is projected to grow from $28.93 billion in 2024 to $49.18 billion in 2025 at a CAGR of 70.0%.

Source: thebusinessresearchcompany

3. Quantum Computing

By processing complicated datasets at previously unheard-of speeds and resolving issues that conventional computers find difficult to handle, quantum computing will revolutionize big data analysis.

Use Case: Algorithms being developed by IBM’s Quantum Computing division have the potential to greatly speed up drug discovery. According to early tests, quantum computing could reduce analysis times from years to months, accelerating and lowering the cost of medical advancements.

The quantum computing market is projected to grow from $2.57 billion in 2024 to $3.62 billion in 2025 at a CAGR of 40.9%.
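The growth rates quoted for the blockchain and quantum computing markets can be checked with the standard compound annual growth rate formula, (end / start)^(1 / years) - 1. The sketch below applies it to the figures cited in this section; the `growth_rate` helper is a hypothetical name used for illustration.

```python
def growth_rate(start, end, years=1.0):
    """Compound annual growth rate, returned as a fraction."""
    return (end / start) ** (1.0 / years) - 1.0


blockchain = growth_rate(28.93, 49.18)  # 2024 -> 2025, in billions of dollars
quantum = growth_rate(2.57, 3.62)       # 2024 -> 2025, in billions of dollars
print(f"{blockchain:.1%}, {quantum:.1%}")
```

Over a single year the formula reduces to simple percentage growth, and both results match the quoted rates of 70.0% and 40.9%.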

5 Big Data Challenges and Solutions

The prominent challenges of big data stem from technological, operational, and organizational limitations. Let’s look at the challenges faced during big data implementation, along with solutions to overcome them. Organizations seeking big data database solutions need to understand the hurdles that come with implementation.

1. Unmanageable volume

Problem

As the name suggests, big data involves large volumes of data, from terabytes up to exabytes. Handling this amount of data without adequate architecture, infrastructure, and computing power becomes strenuous. Without them, you are likely to miss the opportunity to get the required value from your data assets.

How to resolve it?

So, what’s the solution? Use scalable storage technologies to address the continuously climbing volume and scalability issues of big data datasets.

Whether you select cloud hosting, on-premises hosting, or a blended approach, ensure that your choice doesn’t compromise your business needs and future goals. Let us understand this through an example. On-premises infrastructure cannot scale instantly, so you need to expand your infrastructure and extend your admin team as data grows.

If you want to keep all the collected data under your own control, on-premises hosting is a good choice for you. On the other hand, cloud solutions offer flexibility and a ready-made management system for high-volume data. Develop a scalable architecture and tools that can be modified to meet the requirements of continuously growing data without compromising its integrity.

2. Deal with numerous formats

Problem

Sometimes we don’t realize that the majority of the data that we have is unstructured. You can easily find this out: if the data doesn’t fit in a database table (emails, customer reviews, videos, or other formats), then it is unstructured or semi-structured. This raises another challenge: finding a way to bring heterogeneous data into a format that doesn’t compromise your business needs. The format should also match the requirements of the tools you use further down the pipeline for analytics, visualization, predictions, and more.

How to resolve it?

To overcome this problem, use modern data processing technologies and tools to restructure unstructured data and extract insights from it.

Moreover, if you are handling multiple-format data, you might need to merge different tools to parse data and derive the information you require. Use or develop your own applications to automate and expedite the process of turning unstructured data into insightful knowledge.

The decision will be based on the specific needs of your company as well as the type and source of your data. Use GenAI’s capabilities to read, comprehend, and extrapolate meaning from unstructured data.
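As a small example of turning loosely formatted text into structured records, the sketch below parses log lines with a regular expression. The log format, the `LOG_PATTERN` expression, and the `parse_log_line` helper are assumptions made for illustration; real sources will need their own patterns or dedicated parsers.

```python
import re

# Hypothetical log format: "<date> <LEVEL> <message>"
LOG_PATTERN = re.compile(
    r"^(?P<date>\d{4}-\d{2}-\d{2}) (?P<level>[A-Z]+) (?P<message>.+)$"
)


def parse_log_line(line):
    """Return a dict of named fields, or None if the line does not match."""
    match = LOG_PATTERN.match(line.strip())
    return match.groupdict() if match else None


print(parse_log_line("2024-01-05 ERROR disk full"))
print(parse_log_line("not a log line"))
```

Lines that do not match are returned as `None` rather than guessed at, so they can be routed to a separate path for inspection.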

3. Multiple sources and integration challenges

Problem

Are you sure about the relevance of the data you are adding to your storage? Do you still believe that the more data we collect, the better the results we extract? Well, we need to understand that not all data is useful. Sometimes it is plainly irrelevant data that adds no value until you know how to use it in a combined analysis. One of the most prominent hurdles in big data use cases is integrating diverse types of data and creating touch points that yield insights. The first step is figuring out when combining data from various sources makes sense.

For example, you must gather evaluations, performance, sales, and other pertinent data for collaborative analysis if you wish to obtain a 360-degree view of the customer experience. Second, you must set up a location and a set of tools for combining and getting this data ready for analysis.

How to resolve it?

Create an inventory to determine the sources of your data and whether integrating them for collaborative analysis makes sense. Since business people are the ones who comprehend the context and determine what data they require to effectively accomplish their business intelligence objectives, this is primarily a business intelligence activity.

Use data integration tools to collect data from multiple sources and prepare it for big data analytics. Options include offerings from Microsoft, SAP, and Oracle, or specialized data integration tools such as Precisely or Qlik. To combine disparate data into centralized datasets, take advantage of new AI technology.


4. High infrastructural costs

Problem

One of the main obstacles preventing businesses from leveraging their data is a limited budget. Implementing big data database solutions is costly: it involves substantial initial expenses that might not be recouped right away, and it calls for careful planning.

Furthermore, the infrastructure expands in tandem with the exponential growth in data volume. At some point, it can become all too easy to lose track of your assets and the expenses associated with managing them. According to Flexera, over 80% of IT workers acknowledge that controlling cloud expenses is their biggest obstacle.

How to resolve it?

You can solve many cost challenges for big data database solutions by continuously monitoring your infrastructure. Effective DevOps and DataOps practices help keep tabs on the services and resources you use to store and manage data, identify cost-saving opportunities, and balance scalability expenses. Consider costs early on when creating your data processing pipeline.

Do you have duplicate data in different silos that doubles your expenses? Can you tier your data based on business value to optimize its management costs? Do you have data archiving and deletion practices? Answering these questions can help you create a sound strategy and save a ton of money.
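The first two questions can be explored with a very small script. The sketch below is only an illustration: the inventory, the business-value scores, and the tier thresholds are assumptions made up for this example, and real datasets would be fingerprinted from files or object storage rather than from in-memory bytes.

```python
import hashlib

# Hypothetical inventory: (silo, dataset name, content, business value from 1 to 10).
datasets = [
    ("crm", "customers.csv", b"id,name\n1,Ann\n2,Bob\n", 9),
    ("marketing", "customers_copy.csv", b"id,name\n1,Ann\n2,Bob\n", 9),
    ("logs", "clicks_2019.csv", b"ts,page\n1,home\n", 2),
]


def fingerprint(content: bytes) -> str:
    """Return a SHA-256 hash so identical content gets an identical fingerprint."""
    return hashlib.sha256(content).hexdigest()


def find_duplicates(datasets):
    """Group dataset paths by content fingerprint and keep groups with 2+ members."""
    seen = {}
    for silo, name, content, _value in datasets:
        seen.setdefault(fingerprint(content), []).append(f"{silo}/{name}")
    return [paths for paths in seen.values() if len(paths) > 1]


def assign_tier(value: int) -> str:
    """Map a business-value score to a storage tier (thresholds are assumptions)."""
    if value >= 7:
        return "hot"
    if value >= 4:
        return "warm"
    return "archive"


duplicates = find_duplicates(datasets)
tiers = {f"{silo}/{name}": assign_tier(value) for silo, name, _c, value in datasets}

print("Duplicate groups:", duplicates)
print("Tiers:", tiers)
```

Exact-match hashing only catches byte-for-byte copies. Near-duplicates, such as the same table exported with a different column order, need record-level comparison.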

Choose affordable tools and hosting that meet your spending limit. The variety of big data solutions is always growing, giving you the freedom to select and combine various tools to suit your requirements and budget.

Since the majority of cloud-based services are pay-as-you-go, your costs will be directly correlated with the services and processing power you utilize. For large, steady workloads, on-premises hosting can turn out to be the more economical option in the long term. Additionally, there are hybrid and multi-cloud approaches that mitigate the drawbacks of both on-premises and cloud-only approaches.
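Whether on-premises hosting pays off depends on how quickly its upfront cost is offset by lower running costs. Here is a rough break-even sketch. All figures are invented for illustration, and the model deliberately ignores staffing, hardware refresh cycles, discounts, and data growth, so treat it as a starting point rather than a pricing tool.

```python
import math


def break_even_month(upfront_onprem, monthly_onprem, monthly_cloud):
    """Return the first month in which cumulative on-premises cost is no higher
    than cumulative cloud cost, or None if on-premises never catches up."""
    monthly_saving = monthly_cloud - monthly_onprem
    if monthly_saving <= 0:
        return None  # Cloud is cheaper (or equal) every month, so no break-even.
    return math.ceil(upfront_onprem / monthly_saving)


# Hypothetical figures: 120,000 upfront for hardware, 2,000 per month to run it,
# versus 7,000 per month for an equivalent pay-as-you-go cloud setup.
months = break_even_month(120_000, 2_000, 7_000)
print("Break-even after", months, "months")

# A lighter workload where the cloud bill is below the on-premises running cost.
print(break_even_month(120_000, 2_000, 1_500))
```

If the break-even point lies beyond the expected lifetime of the hardware, pay-as-you-go or a hybrid setup is likely the safer choice.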


5. Shortage of in-house and market big data talent

Problem

One of the most difficult and costly problems in big data is the lack of skills, for two reasons. First, finding suitable tech staff for a project is becoming more difficult: data scientists, engineers, and analysts are already in greater demand than there is supply. Second, as more businesses invest in big data initiatives and vie for the best talent available, the demand for specialists is likely to increase sharply.

How to resolve it?

The easiest, and possibly fastest, way to address a skills shortage is to collaborate with a seasoned and trustworthy tech supplier who can readily bridge the gap for your big data and BI requirements. If hiring in-house is too expensive, you might be able to save money by outsourcing the work.

You and your team are the only ones who truly understand your data. To retain the talent in-house, think about retraining and upskilling your present staff to acquire the requisite competency. Enhance your workforce’s abilities with GenAI solutions to manage data-driven jobs and maximize performance.

Provide analytics and visualization tools that your organization’s non-technical staff can use. Make it simple for your staff to gather information and use it to inform decisions. You can also hire database developers for big data database management if your budget allows.


The Bottom Line

To sum up, big data database technology has revolutionized business operations and offers valuable insights. Choosing the best database for billions of records can lead to better decision-making and innovation.

Big data databases drive business operations by helping companies manage big data with tools like Hadoop, Apache Spark, and machine learning algorithms. They uncover patterns and improve the user experience.

Frequently Asked Questions

1. What are the benefits of big data databases?

Big data provides critical business insights to facilitate decision-making, drive innovation, and make business processes more effective. It offers a way to analyze customer behavior, enhance customization, predict future trends, and automate business operations to save time.

You can pick what is best for your business from the big data database list according to your specific requirements. With big data technology, companies gain a competitive edge, boost efficiency, and open up new opportunities to improve performance and profitability across many industries.

2. What are big data technologies?

Big data technology comprises an assortment of tools and frameworks for processing, storing, and analyzing large datasets. Examples include Hadoop, Apache Spark, Apache Flink, and NoSQL databases such as MongoDB and Cassandra.

Companies can handle large volumes of data by using these big data tools. The tools also help carry out analytics, such as machine learning and predictive modeling, that extract useful information from complex datasets.

3. What are the various types of big data databases?

The categories under which databases can be divided are:

1. Hierarchical database

2. Network database

3. Object-oriented database

4. Relational database

5. NoSQL database

Each type of database is developed to cater to unique requirements, from managing structured data to handling large-scale, unstructured data.

4. What is an open-source big data database?

An open-source big data database is a system created specifically to handle huge volumes of complex data. Its source code is publicly visible, and anyone can access, modify, and distribute it. Such databases are built and maintained by a collaborative community, and they offer a cost-effective way to manage and analyze large datasets.

5. Is big data processed using relational databases?

Not always. Structured big data can be stored in relational databases, but a traditional RDBMS tends to struggle with the volume, velocity, and variety of big data. For this reason, big data is often better managed through distributed systems and NoSQL databases, which are built to be more scalable and flexible.

6. What are the non-relational databases that often arise from big data called?

Non-relational databases used in big data environments are popularly known as NoSQL databases. The main types are document databases (e.g., MongoDB), key-value stores (e.g., Redis), wide-column stores (e.g., Cassandra), and graph databases (e.g., Neo4j). They can achieve significant scalability and high performance for unstructured or semi-structured data.
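To see how these four models differ, it can help to express the same record in each of them. The sketch below uses plain Python structures as stand-ins. It does not call MongoDB, Redis, Cassandra, or Neo4j, and the order data, key names, and the `products_bought_by` helper are hypothetical.

```python
import json

# Document model (MongoDB-style): one nested, self-contained record.
document = {
    "_id": "order-1001",
    "customer": {"id": "c-42", "name": "Ann"},
    "items": [{"sku": "A1", "qty": 2}, {"sku": "B7", "qty": 1}],
}

# Key-value model (Redis-style): an opaque value looked up by a single key.
key_value = {"order:1001": json.dumps(document)}

# Wide-column model (Cassandra-style): rows grouped under a partition key
# (the customer) and identified within it by a clustering key (order, sku).
wide_column = {
    "c-42": {
        ("order-1001", "A1"): {"qty": 2},
        ("order-1001", "B7"): {"qty": 1},
    }
}

# Graph model (Neo4j-style): nodes plus labeled relationships between them.
nodes = {
    "c-42": {"label": "Customer", "name": "Ann"},
    "order-1001": {"label": "Order"},
    "A1": {"label": "Product"},
    "B7": {"label": "Product"},
}
edges = [
    ("c-42", "PLACED", "order-1001"),
    ("order-1001", "CONTAINS", "A1"),
    ("order-1001", "CONTAINS", "B7"),
]


def products_bought_by(customer_id):
    """Follow PLACED and then CONTAINS relationships, as a graph query would."""
    orders = {dst for src, rel, dst in edges if src == customer_id and rel == "PLACED"}
    return sorted(dst for src, rel, dst in edges if src in orders and rel == "CONTAINS")


print(json.loads(key_value["order:1001"])["customer"]["name"])
print(sum(cols["qty"] for cols in wide_column["c-42"].values()))
print(products_bought_by("c-42"))
```

The right model depends on the access pattern: whole-record reads suit documents, lookups by a known key suit key-value stores, large per-partition scans suit wide-column stores, and relationship traversal suits graphs.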